This research agent project is an impressive demonstration of leveraging large language models (LLMs) and parallel processing techniques for efficient information synthesis. Here's a summary of key aspects:

-

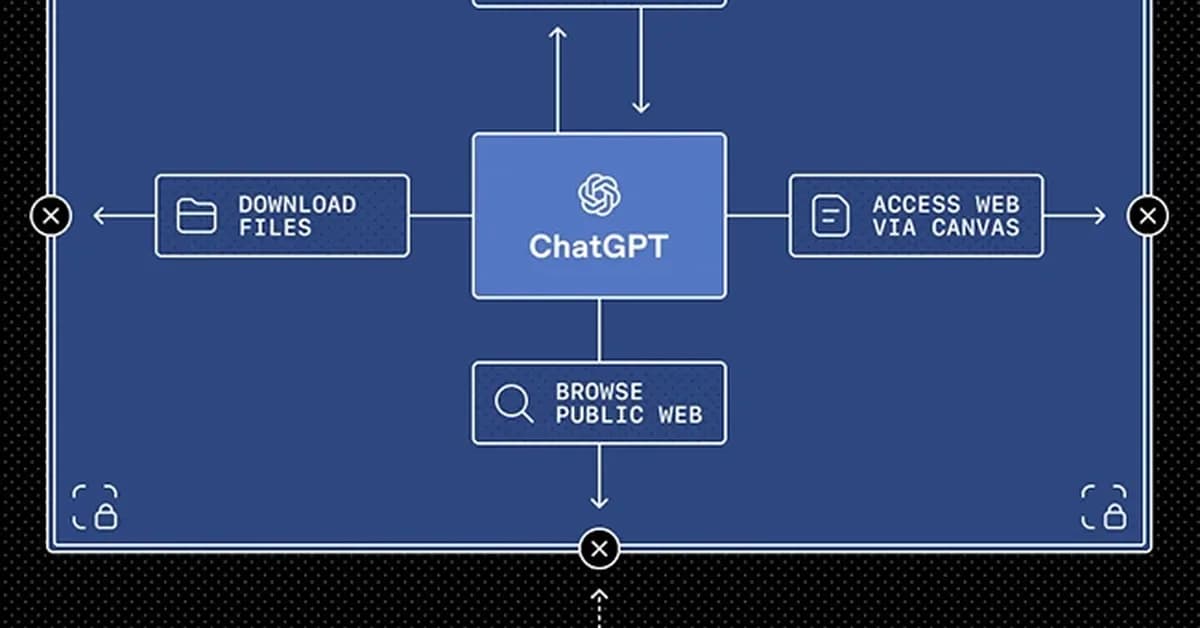

Architecture Overview:

- Uses LangChain and Streamlit to create an interactive web application.

- Employs threading and asynchronous calls to run multiple data retrieval tasks in parallel.

-

Parallel Processing:

- The core idea is to fetch information from various sources (GitHub, Stack Overflow, Reddit, etc.) simultaneously rather than sequentially.

- This significantly reduces the overall time required for research by minimizing wait times between API calls.

-

LLM Integration:

- Supports both local and cloud-based LLMs via environment variables.

- Uses Ollama or OpenAI APIs depending on configuration.

- Provides a seamless switch between different models (e.g., Groq, Gemini) without changing the codebase.

-

Agent Design:

- Defines agents for each data source with specific retrieval and summarization logic.

- Agents are designed to be modular and can be easily extended or modified.

-

Threading Challenges:

- Initially faced issues with

nonlocalvariables in threaded callbacks, which

- Initially faced issues with

Read the full article at DEV Community

Want to create content about this topic? Use Nemati AI tools to generate articles, social posts, and more.