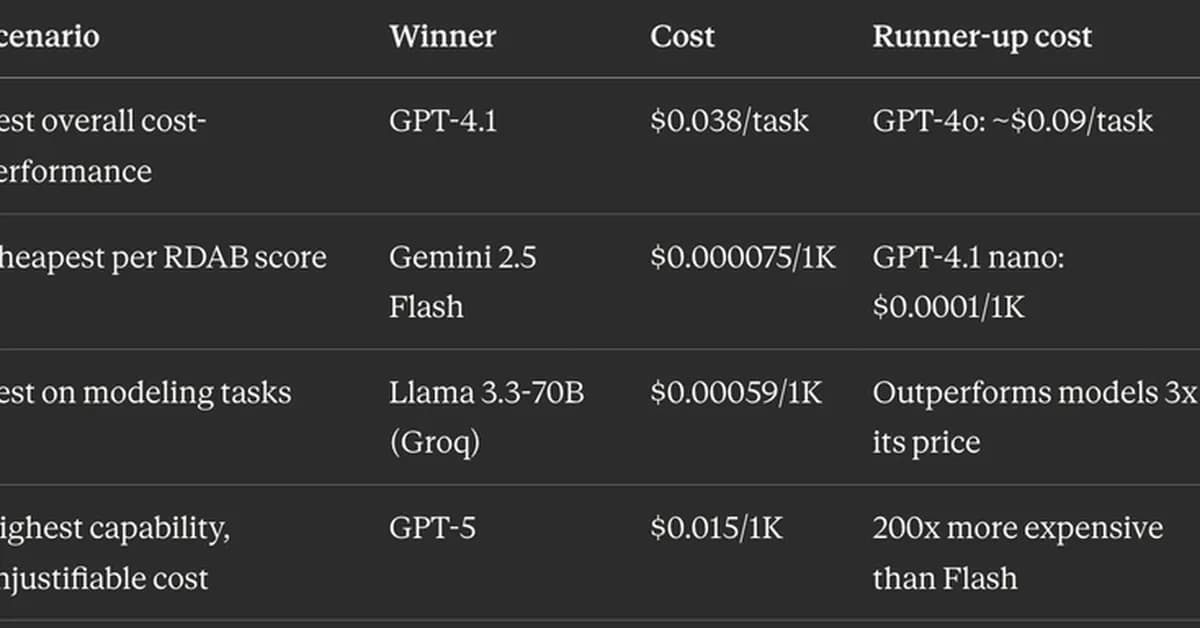

CostGuard, a new open-source tool, helps teams choose among Large Language Models (LLMs) by estimating cost per task. Its estimates show wide variation in efficiency and cost across models, and the cheapest model per token is not always the most economical for a given task. Developers now have a concrete basis for justifying or questioning their LLM spend, potentially saving thousands of dollars per month on AWS bills.
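To make the per-token versus per-task distinction concrete, here is a minimal arithmetic sketch. It is not CostGuard's API or method: the `ModelProfile` class, the `cost_per_task` function, the model names, and every price and token count are hypothetical values chosen for illustration. It assumes a task's cost is the per-call cost (input and output tokens times their prices) multiplied by the average number of calls needed to get an acceptable result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical pricing and usage profile for one model (all numbers made up)."""
    name: str
    input_price_per_mtok: float   # USD per 1M input tokens
    output_price_per_mtok: float  # USD per 1M output tokens
    avg_input_tokens: int         # average prompt size for the task
    avg_output_tokens: int        # average completion size for the task
    avg_attempts: float           # average calls needed for an acceptable result


def cost_per_task(m: ModelProfile) -> float:
    """Return the estimated USD cost of completing one task with this model."""
    per_call = (
        m.avg_input_tokens * m.input_price_per_mtok
        + m.avg_output_tokens * m.output_price_per_mtok
    ) / 1_000_000
    return per_call * m.avg_attempts


# Half the per-token price, but longer outputs and more retries.
cheap = ModelProfile("cheap-per-token", 0.50, 1.50, 2000, 1500, 3.0)
# Double the per-token price, but concise and right the first time.
pricey = ModelProfile("pricey-per-token", 1.00, 3.00, 2000, 500, 1.0)

for model in (cheap, pricey):
    print(f"{model.name}: ${cost_per_task(model):.5f} per task")
```

With these invented numbers, the model that costs half as much per token ends up costing more per task, because it produces longer outputs and needs more attempts. Real figures would have to come from measured token counts and current provider pricing.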

Read the full article at DEV Community

