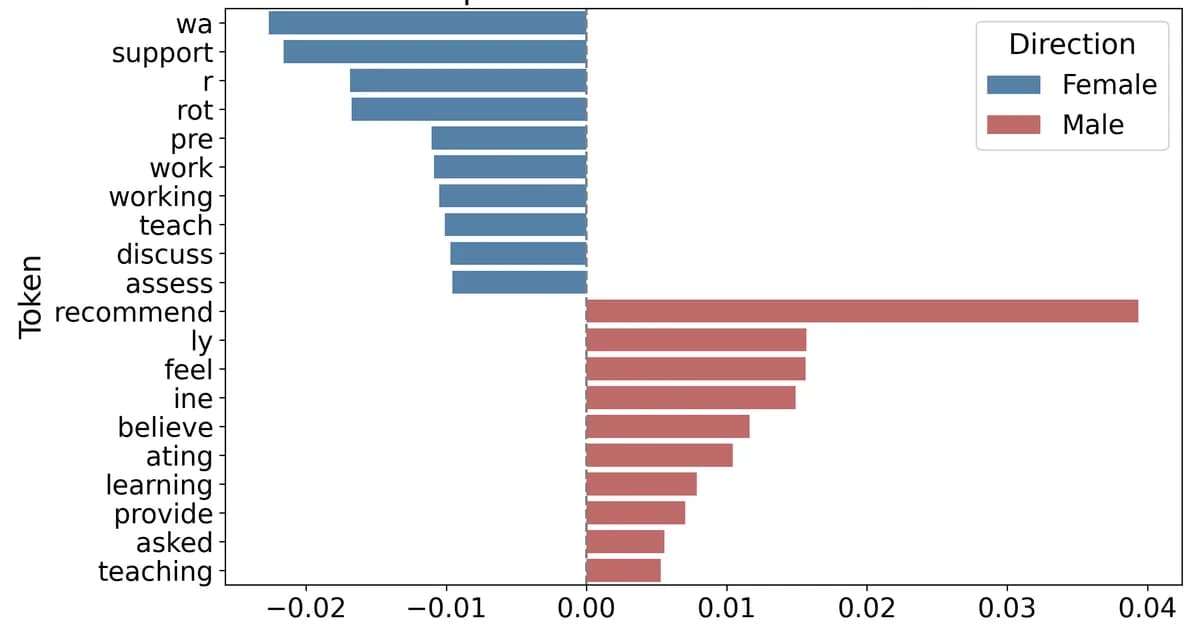

Researchers found that Transformer-based models and large language models can infer an applicant's gender from de-gendered academic recommendation letters with up to 68% accuracy. The models pick up on implicit gender cues that remain after de-gendering, such as emotional language associated with female applicants. The study underscores the need to audit evaluative text in real-world recommendation letters to mitigate bias in hiring and admissions decisions, and it shows that true gender neutrality in such documents remains difficult to achieve.

Read the full article at arXiv cs.LG (ML)

