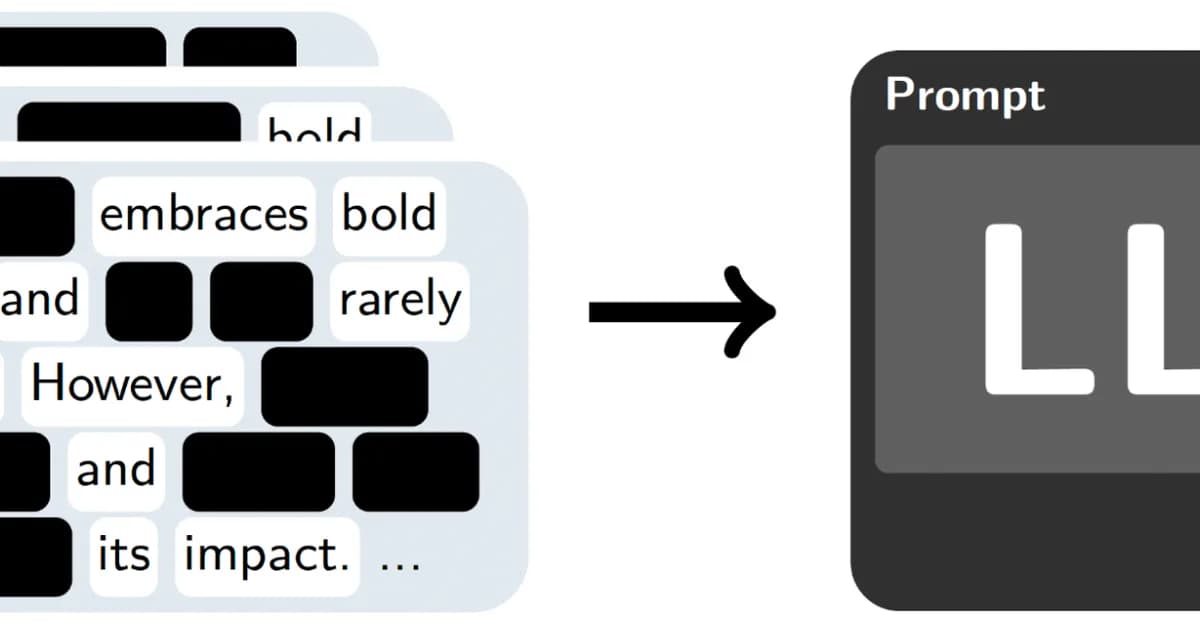

Researchers have developed SPEX and ProxySPEX, algorithms that identify influential interactions in large language models at scale. Identifying these interactions makes models more interpretable: developers can see the complex dependencies inside an AI system, which in turn supports its reliability and trustworthiness. Next steps include integrating these methods across stages of the machine learning lifecycle to give a more comprehensive picture of model behavior.


