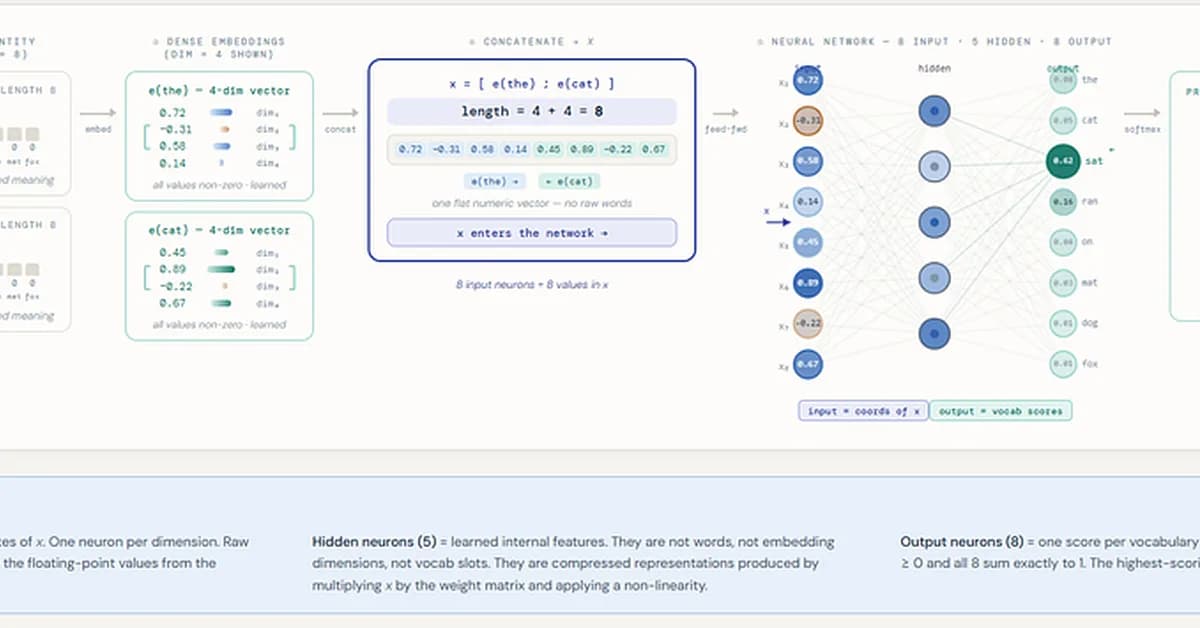

The article explains how early neural language models work: each word is mapped to an embedding vector, and learned weights turn the embeddings of the context words into a prediction of the next word. Understanding these mechanisms clarifies the transition from n-gram models to neural networks and shows why embedding vectors matter for capturing contextual relationships between words.
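To make the mechanism concrete, here is a minimal sketch of the forward pass such a model might use. It is not taken from the article: the toy vocabulary, the dimensions, and the names `embeddings`, `weights`, and `next_word_probs` are all illustrative assumptions, the parameters are random rather than trained, and the hidden layer that early neural language models typically include is omitted for brevity.

```python
import math
import random

random.seed(0)  # fixed seed so the untrained toy parameters are reproducible

# Hypothetical toy vocabulary and sizes (assumptions, not from the article)
vocab = ["the", "cat", "sat", "on", "mat"]
word_to_id = {word: i for i, word in enumerate(vocab)}
EMBED_DIM = 3   # length of each embedding vector
CONTEXT = 2     # number of preceding words used as context

# Embedding table: one vector per vocabulary word (random, i.e. untrained)
embeddings = [[random.uniform(-0.1, 0.1) for _ in range(EMBED_DIM)]
              for _ in vocab]

# Output weights: one row per vocabulary word, scoring the concatenated context
weights = [[random.uniform(-0.1, 0.1) for _ in range(CONTEXT * EMBED_DIM)]
           for _ in vocab]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def next_word_probs(context_words):
    """Return a probability for each vocabulary word given the context."""
    # 1. Look up each context word's embedding and concatenate them
    x = []
    for word in context_words:
        x.extend(embeddings[word_to_id[word]])
    # 2. Score every candidate next word with a dot product
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in weights]
    # 3. Normalise the scores into a probability distribution
    return softmax(scores)


probs = next_word_probs(["the", "cat"])
for word, p in zip(vocab, probs):
    print(f"{word}: {p:.3f}")
```

Because the parameters are random, the printed probabilities are close to uniform. Training would adjust both `embeddings` and `weights` so that words observed after a given context receive higher probability, which is where the embeddings pick up contextual relationships that n-gram counts cannot share across similar words.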

Read the full article at Towards AI - Medium

