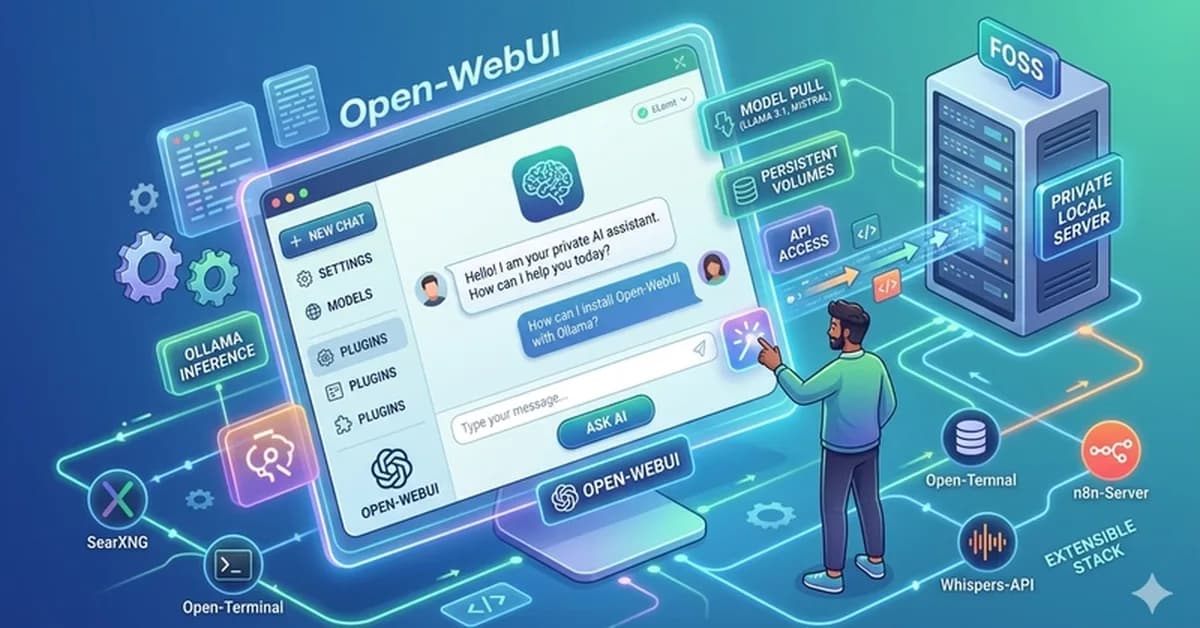

This guide explains how to set up Open WebUI and Ollama with Docker to run large language models locally. Because the models run on your own machine, prompts and data stay local and there are no subscription costs. After installing Docker, developers can deploy both services from a single docker-compose.yml file that persists data across restarts and routes traffic between the two containers over an internal network.
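As a rough illustration of what such a file could look like, here is a minimal sketch. It is not taken from the article: the image tags, the host port 3000, and the volume names are assumptions based on the projects' commonly published defaults, so check them against the full guide before use.

```yaml
# docker-compose.yml (illustrative sketch; image tags, host port, and volume names are assumptions)
services:
  ollama:
    image: ollama/ollama
    volumes:
      - ollama:/root/.ollama        # keep downloaded models across restarts
    # No "ports" entry: Ollama is reachable only from other containers
    # on the Compose network, not from the host.

  open-webui:
    image: ghcr.io/open-webui/open-webui:main
    depends_on:
      - ollama
    ports:
      - "3000:8080"                 # host port 3000 is an arbitrary choice
    environment:
      # "ollama" resolves through Compose's internal DNS to the service above
      - OLLAMA_BASE_URL=http://ollama:11434
    volumes:
      - open-webui:/app/backend/data  # keep chats and settings across restarts

volumes:
  ollama:
  open-webui:
```

With a file like this in place, `docker compose up -d` would start both containers, and the web interface would be served at http://localhost:3000 under the port mapping assumed above.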

Read the full article at DEV Community
