The article discusses a significant security vulnerability in Retrieval-Augmented Generation (RAG) systems, which are becoming increasingly popular for their ability to enhance language models with external information. The author identifies that while there is extensive focus on securing user inputs and direct interactions with AI agents, the document layer — where documents fed into RAG systems reside — remains largely unprotected.

Key Points:

-

RAG Security Gap:

- Documents inputted into RAG systems can contain malicious instructions or payloads.

- Current security measures are inadequate for detecting such threats in the document layer.

-

Injection Attacks:

- Indirect injection attacks involve embedding harmful code or commands within legitimate documents, which when processed by a RAG system, could lead to unauthorized actions like data leakage or execution of harmful scripts.

-

Detection Challenges:

- Traditional security measures are insufficient for detecting such threats in the document layer.

- The need for pre-ingestion scanning similar to input validation practices adopted in web applications.

-

Solution: RAG Injection Scanner (RIS):

- A tool developed by the author to detect malicious payloads within documents before they enter a RAG system.

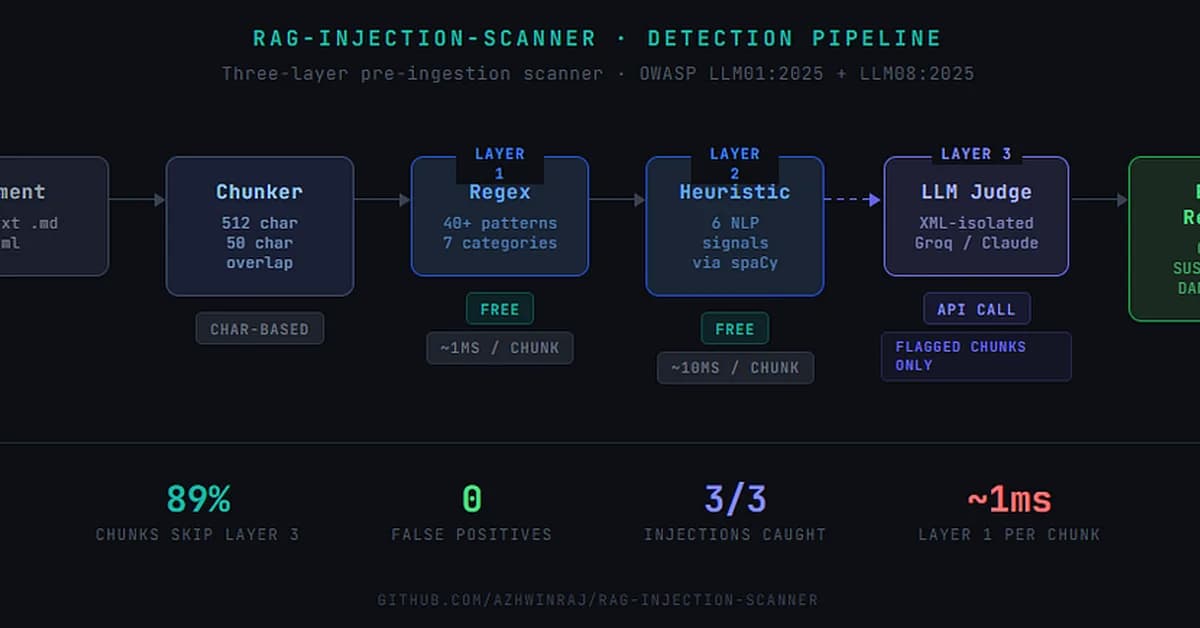

- Utilizes three layers of detection

Read the full article at Towards AI - Medium

Want to create content about this topic? Use Nemati AI tools to generate articles, social posts, and more.

![[AINews] The Unreasonable Effectiveness of Closing the Loop](/_next/image?url=https%3A%2F%2Fmedia.nemati.ai%2Fmedia%2Fblog%2Fimages%2Farticles%2F600e22851bc7453b.webp&w=3840&q=75)