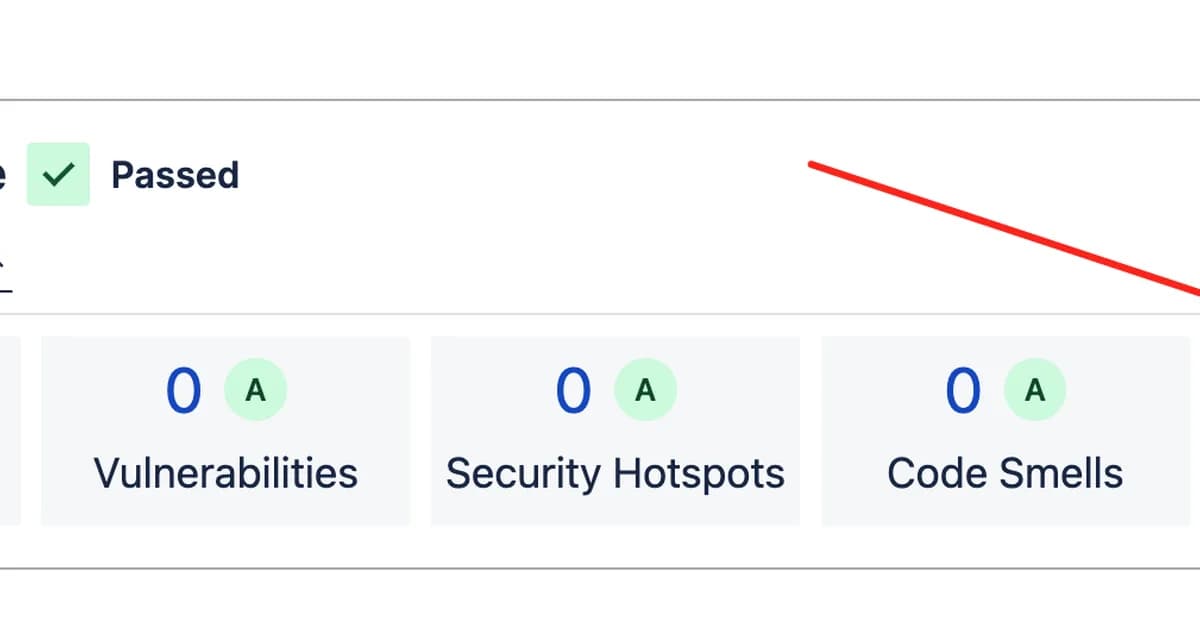

A quality verification pipeline built to measure test effectiveness, not just coverage, failed to catch weak tests because of a logic gap in its design: when the initial assessment flagged issues but a later step reported "no changes", the task could still be marked complete. The failure shows how an automated testing system can mistake the absence of changes for a resolved problem. To close the gap, the system now gives AI agents explicit instructions and runs additional checks to confirm that flagged problems are actually resolved before a task is marked complete.
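The kind of check described above can be sketched roughly as follows. This is a minimal illustration, not the pipeline's actual code: the `Assessment` type, the `can_mark_complete` function, and the `files_changed` parameter are all hypothetical names introduced here, and the real system may structure its checks differently.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Assessment:
    """Hypothetical result of a test-effectiveness check."""
    weak_tests: list[str] = field(default_factory=list)  # tests flagged as weak


def can_mark_complete(initial: Assessment, final: Assessment, files_changed: int) -> bool:
    """Return True only when flagged problems are verifiably resolved."""
    if not initial.weak_tests:
        # Nothing was flagged, so there is nothing to resolve.
        return True
    if files_changed == 0:
        # Issues were flagged but nothing changed: "no changes" must not
        # count as completion.
        return False
    # Re-check: none of the initially flagged tests may still be flagged.
    still_weak = set(initial.weak_tests) & set(final.weak_tests)
    return not still_weak
```

The point of the sketch is the middle branch: a run that reports zero changes after issues were flagged is rejected outright instead of being treated as a clean pass.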

Read the full article at DEV Community

